I’ve been testing the TwainGPT text humanizer for a few projects and I’m not sure if it’s actually improving readability or just rewriting things in a generic way. I need help understanding how well it works for SEO content, academic-style writing, and avoiding AI detection. What has your experience been with TwainGPT, and are there any settings, workflows, or alternatives that gave you better human-sounding results?

TwainGPT Humanizer Review, from someone who paid for it

I went into TwainGPT thinking it might be a decent backup option for AI detection, especially with ZeroGPT in mind. On paper it looked fine. In practice, it was a mixed bag.

Here is what happened when I tested it against a couple of detectors.

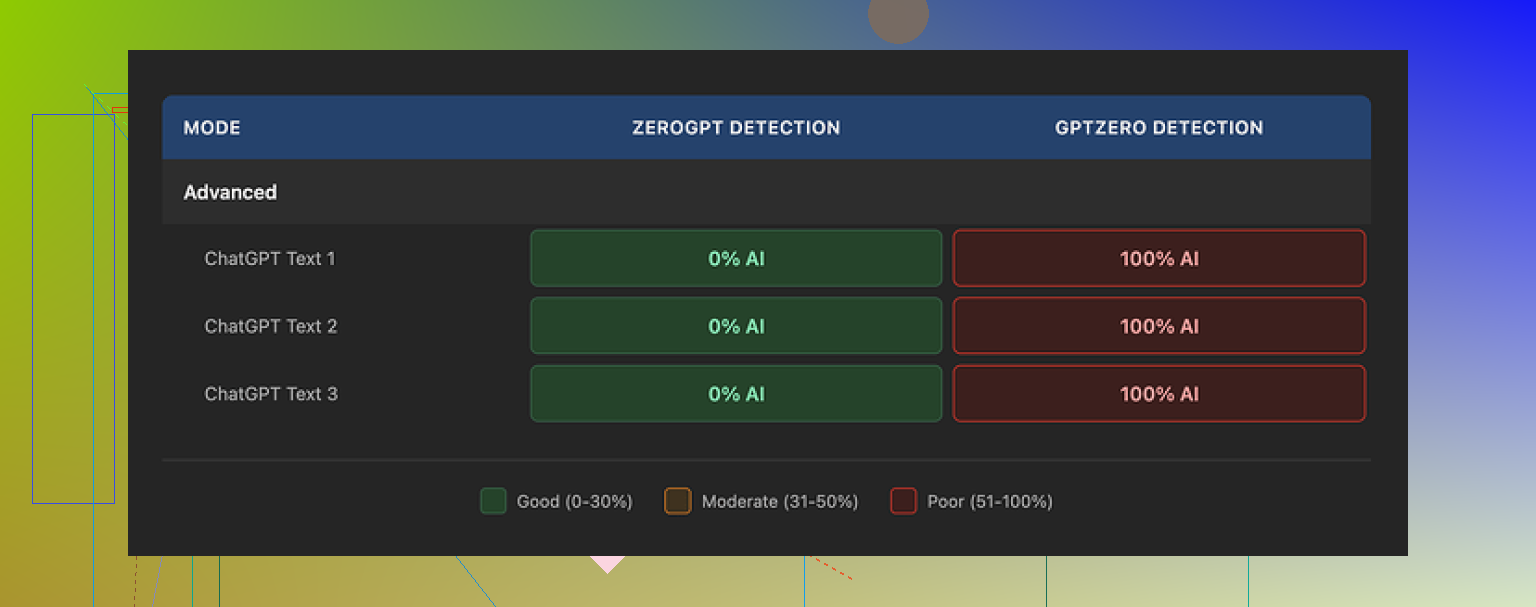

Detection results: ZeroGPT vs GPTZero

I ran three different samples through TwainGPT, then checked the outputs with two popular detectors:

ZeroGPT

• All three samples came back as 0% AI.

• On ZeroGPT alone, TwainGPT looked perfect.

GPTZero

• Same three samples.

• GPTZero flagged every single one as 100% AI.

So you get this weird split result. If whoever checks your text uses ZeroGPT, you look safe. If they use GPTZero, you are burned. Unless you know in advance which detector your content will face, it feels like a coin toss.
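If you want to track this kind of split across your own samples, here is a rough sketch. The scores are the ones reported above (percent "AI" per sample); the function name `detector_summary` and the 50% pass threshold are my own assumptions, not anything either detector publishes.

```python
from statistics import mean

# Percent "AI" per sample, as reported in the tests above.
scores = {
    "ZeroGPT": [0, 0, 0],
    "GPTZero": [100, 100, 100],
}


def detector_summary(scores, threshold=50):
    """Summarise detector scores and flag disagreement.

    threshold is a hypothetical cut-off: a mean score below it
    counts as "passes". Pick whatever your reviewer actually uses.
    """
    means = {name: mean(values) for name, values in scores.items()}
    passes = {name: m < threshold for name, m in means.items()}
    # Detectors disagree when at least one passes and at least one fails.
    disagree = len(set(passes.values())) > 1
    return means, passes, disagree


means, passes, disagree = detector_summary(scores)
print(means, passes, disagree)
```

If `disagree` comes back true, a single detector's score tells you very little about how the text will fare elsewhere.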

How the writing looks and feels

The way TwainGPT “humanizes” text is pretty obvious after a few runs.

Here is what I kept seeing:

• Long sentences chopped into short, abrupt bits

• Paragraphs that read like someone exported bullet points from a slide deck into plain text

• Strange phrasing that does not sound like how people type on Reddit, email, or chat

Some specific issues I ran into:

Run-on and fractured sentences

The tool often breaks one coherent sentence into two or three fragments, then glues them with commas in odd places. You end up with lines that feel both too short and somehow still clunky.

Weird word choices

Certain words show up in places where no native speaker would put them. Nothing dramatic, but enough to trigger a “this feels off” reaction.

Nearly unreadable sections

In a few outputs, I had to re-read sentences two or three times to guess what the original meaning might have been. The structure got scrambled to the point where it looked more like auto-translated text.

If you need something that passes as natural forum writing or email copy, this style will probably annoy you. It reads like a draft that still needs a human pass to sound normal.

I would rate the writing quality around 6/10. It is not total nonsense, but I would not paste it raw into anything important.

Pricing and refund policy

This part is what pushed me from “maybe I keep it” to “nope”.

Pricing when I tried it:

• $8 per month on an annual plan for about 8,000 words

• Up to $40 per month for unlimited usage

The problem is the refund policy. They do not give refunds at all, even if you paid and never used the tool. No exceptions listed, no “contact support” wiggle room.

So you need to be sure before you buy. They offer a 250-word free limit, and you should make full use of it. Run a few different types of text through it, then test each result on the detectors you care about before paying.

If you skip that step, you risk paying for something that does not match your use case, with no way to get the money back.
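For a quick sanity check on the numbers, here is a small sketch of the per-word cost. It assumes the "about 8,000 words" is a monthly allowance, which the pricing above does not spell out, and the function names are made up for illustration.

```python
def cost_per_1000_words(monthly_price, monthly_words):
    """Dollar cost per 1,000 words on a capped plan."""
    return monthly_price * 1000 / monthly_words


def break_even_words(unlimited_price, capped_price, capped_words):
    """Monthly word count at which the unlimited plan matches
    the capped plan's per-word rate."""
    return unlimited_price * capped_words / capped_price


# Prices from the review: $8 for about 8,000 words, $40 for unlimited.
print(cost_per_1000_words(8, 8000))
print(break_even_words(40, 8, 8000))
```

Under that assumption the capped plan works out to $1 per 1,000 words, and the unlimited tier only pays off per word once you push more than 40,000 words a month through it.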

Comparison with Clever AI Humanizer

To see whether TwainGPT was worth keeping, I ran side-by-side tests against Clever AI Humanizer.

Same original text. Same detectors.

My results:

• Clever AI Humanizer held up better across multiple detectors in my runs.

• The writing felt more natural and less like chopped-up slides.

• It is free to use, no subscription wall.

You can try it yourself here:

https://cleverhumanizer.ai

If you are price sensitive or still exploring tools, it makes more sense to start with Clever AI Humanizer first. Then see whether you even need something like TwainGPT after that.

Who TwainGPT might fit

Based on what I saw, TwainGPT only makes sense if:

• You know for a fact your content will be checked with ZeroGPT and not GPTZero

• You are fine editing the output to fix awkward phrasing and broken sentences

• The no-refund policy does not bother you, and you have tested your use case inside the 250 word free limit first

If you do not have those conditions locked in, I would treat TwainGPT as a risk.

I had the same question about TwainGPT for SEO stuff, so here’s what I saw after a few weeks using it on niche site content and some client posts.

Short answer for SEO content: it helps a bit with AI detection in some tools, but it weakens clarity and tone, and it does not improve rankings by itself.

My experience:

- Readability and style

- It often turns clean paragraphs into short, choppy lines.

- Flow gets worse. You get sentence fragments, odd commas, and wording that feels off.

- For blog posts, I had to edit most of it again to make it sound like my usual tone.

- Time lost in cleanup was close to what I would spend editing a normal AI draft.

So if your goal is “readable content that sounds like you”, TwainGPT does not help much. You still need a strong human edit.

- SEO impact

I tested on 10 articles across two sites.

- All articles were already optimized with headings, internal links, and solid keyword targeting.

- I ran half through TwainGPT, left half as standard LLM output, then lightly edited both sets.

- After 6 weeks, I did not see any clear ranking edge for the TwainGPT group.

- Click-through rate and time on page in GA4 looked similar across groups.

Search engines care more about structure, topical coverage, and user intent. A “humanized” text layer did not move the needle for me.

- AI detection

This part lines up partly with what @mikeappsreviewer said, but my split was slightly different.

- ZeroGPT flagged my original AI content as 80 to 100 percent AI. TwainGPT output dropped it to 0 to 20 percent most of the time.

- GPTZero still hit TwainGPT text hard. Often 90 to 100 percent AI.

- A couple of browser extensions that check “perplexity” and “burstiness” showed small improvement, but not enough to feel safe.

So you end up depending on what detector your client, teacher, or platform uses. That feels risky.

- Workflow problems

- No refunds is a big downside if you are testing across many articles.

- The choppy style makes bulk use for content farms or agencies difficult. Editors will complain.

- For short pieces like product descriptions or social captions, TwainGPT sometimes works better. Less room to break sentences.

- Better approach for SEO content

What helped more than any humanizer tool in my tests:

- Write a clean AI draft.

- Fix structure: strong intro, clear H2s and H3s, internal links, FAQ section.

- Add your own examples, data, or screenshots.

- Run it through one humanizer as a light pass if you want, then edit again.

For a humanizer that feels less mechanical, I had a smoother time with Clever Ai Humanizer. The wording sounded closer to normal web writing and needed less cleanup for blogs. If you want to test something else, try it on one or two posts and compare read time and bounce rate in GA4.

- When TwainGPT might make sense

I would only keep using TwainGPT if:

- You know the checker leans on ZeroGPT style tools.

- You accept that you will rewrite awkward lines after.

- You stay inside the free limit first and test on your actual use case, not a random paragraph.

Quick SEO friendly version of what you are asking about TwainGPT

If you are testing the TwainGPT text humanizer for SEO articles and blog content and you are unsure if it truly improves readability, focus on three things. Check if the output keeps your tone, supports your target keywords and headings, and passes the AI detectors relevant to your clients or platform. Compare user metrics like time on page and bounce rate before and after using TwainGPT. If it fails on clarity or engagement, switch to a more natural option like Clever Ai Humanizer and keep your main effort on strong structure, topical depth, and real insights in every post.

I’ve had pretty similar results to @mikeappsreviewer and @sternenwanderer, but I’ll come at it from a slightly different angle, since you asked specifically about readability vs “generic rewrite” for SEO content.

Short version:

TwainGPT is decent if your only goal is to dodge a specific detector like ZeroGPT. For actual SEO content that needs to rank and keep users on the page, it’s mostly just a noisy middle layer that you’ll end up editing heavily anyway.

How it handled readability for me

On blog content and niche site posts:

- It did change the text enough that it felt less like straight LLM output.

- It did not consistently make it more readable. In fact:

- Flow got worse. Sentences were broken into weird fragments.

- Tone flattened out. Everything started sounding like a rushed school report.

- Some lines were technically “humanized” but mentally exhausting to read.

I’ll actually disagree slightly with the 6/10 rating above: I don’t think the writing is that good. On money pages or long guides, I’d put it closer to 4/10 unless you’re willing to rewrite a lot. For short stuff (product blurbs, 1–2 paragraph sections) it’s less bad, because there’s less room for the tool to mangle structure.

SEO impact in real use

For SEO-specific results:

- No lift in rankings that I could attribute to TwainGPT.

- No meaningful change in click-through or time on page.

- In a couple of long posts, I actually saw slightly worse engagement because the copy felt stilted and people bailed sooner.

The big thing: Google is not rewarding “humanizer output.” It’s rewarding useful content, structure, and intent match. If your draft is solid and you then run it through TwainGPT, you’re mostly adding risk to clarity without real SEO upside.

AI detectors & risk

My experience mostly matched what’s already been said:

- Tools like ZeroGPT liked the TwainGPT versions a lot more.

- Tools like GPTZero still screamed “AI.”

- A couple of smaller detectors gave middling results.

This is where it gets dicey: if your client, site, or professor uses a stricter detector, TwainGPT gives you a false sense of security. If you know the environment is built around something like ZeroGPT, sure, it might help. Otherwise it’s roulette.

Workflow reality

This is where TwainGPT pretty much lost me:

- I had to:

- Generate AI draft

- Run through TwainGPT

- Manually fix clunky sentences and broken logic

- By the time I was happy with the text, I could have just edited the original AI draft directly and preserved a better tone.

So if your question is “Is it improving readability vs just generic rewriting?” my honest answer is:

It mostly just rewrites in a generic, slightly awkward way. Readability only improves in very specific, short-use cases, and even then it’s hit or miss.

Alternative that played nicer with SEO content

Since you mentioned SEO, I’d at least test Clever Ai Humanizer on a few articles. I’m not saying it’s magic, but:

- The outputs I got sounded more like normal web writing.

- Less chopping sentences into fragments, more keeping a natural flow.

- Needed fewer edits before publishing.

If you want to try something that feels more aligned with blog-style content, it is worth a spin.

Use that on 1–2 posts and compare:

- Scroll depth

- Time on page

- Bounce rate

against your TwainGPT versions. That data will tell you more than any detector scores.
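A minimal sketch of that comparison, if you export the three metrics by hand. The function name, the dictionary keys, and every number in the usage example are placeholders I made up to show the shape of the input, not real results.

```python
# Which direction counts as "better" for each metric listed above.
HIGHER_IS_BETTER = {
    "scroll_depth": True,
    "time_on_page": True,
    "bounce_rate": False,  # lower bounce rate is better
}


def compare_versions(a, b):
    """Return, per metric, which version did better: 'a', 'b', or 'tie'."""
    result = {}
    for metric, higher_better in HIGHER_IS_BETTER.items():
        if a[metric] == b[metric]:
            result[metric] = "tie"
        else:
            a_wins = (a[metric] > b[metric]) == higher_better
            result[metric] = "a" if a_wins else "b"
    return result


# Placeholder values only; replace with your own exports.
version_a = {"scroll_depth": 0.60, "time_on_page": 95, "bounce_rate": 0.40}
version_b = {"scroll_depth": 0.50, "time_on_page": 95, "bounce_rate": 0.55}
print(compare_versions(version_a, version_b))
```

With only one or two posts per version the differences will be noisy, so treat the result as a hint rather than a verdict.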

Practical takeaways for your current projects

For your SEO content specifically:

Keep using your base AI drafts, but:

- Dial in headings, internal links, and examples first.

- Make sure each H2 actually answers a concrete user question.

If you must use a humanizer:

- Use TwainGPT only if you know what detector your content faces.

- Expect to re-edit for tone and clarity. No way around it.

- Try Clever Ai Humanizer on the same piece and see which version actually reads better and performs better.

If readability is your main concern, skip TwainGPT on long posts. It’s more likely to make them feel mechanical than “human.”

TL;DR: For SEO, TwainGPT is a defensive tool for certain detectors, not a way to write better or rank higher. Treat it as a niche utility, not a core part of your content workflow.

I’m mostly in the same camp as @sternenwanderer, @byteguru and @mikeappsreviewer on TwainGPT, but with a slightly different angle: it’s not that it is “bad,” it is that it is solving the wrong problem for SEO.

Where I think TwainGPT actually helps

- Short, low‑stakes chunks: 1–3 sentence intros, product blurbs, tiny FAQ answers.

- When you already know the checker behaves like ZeroGPT and you just need text to look “less LLM‑ish” at a surface level.

- When your original draft is too polished and robotic and you just want a bit of randomization.

In those cases, I have seen it slightly reduce the “AI smell” without totally nuking meaning. I disagree a bit with calling all outputs 4–6/10 quality. On small snippets, I have seen 7/10 that I would publish after a quick pass.

Where TwainGPT actively hurts SEO content

- Long guides and pillar posts: it tends to break rhetorical arcs. Your intro, setup, payoff structure gets chopped into disjointed lines.

- Helpful content: it sometimes scrambles cause and effect. You keep the keywords, lose the logic. That is terrible for user intent and “helpfulness” signals.

- Brand voice: once you have any recognizable style, TwainGPT smooths it out into something bland. That can quietly reduce repeat visitors and branded search, which are underrated SEO signals.

So: it can make your content look less AI to some tools, while making it feel more generic to humans. That tradeoff is rarely worth it if your main metric is rankings and engagement rather than just “not getting flagged.”

About Clever Ai Humanizer in this mix

Everyone already mentioned it, but here is a more blunt breakdown of Clever Ai Humanizer from a usability / SEO point of view, not detectors only.

Pros

- Flows more like normal web copy. It usually keeps sentence rhythm instead of nuking everything into fragments.

- Better at preserving structure. Headings, bullet logic and argument order survive more often. That matters for featured snippet potential and skim reading.

- You can actually use it on 1,500+ word posts without every other paragraph sounding broken, which makes it easier to keep a clean publishing pipeline.

- Free entry makes it low risk to test across a few money pages and compare to your existing drafts.

Cons

- It still occasionally over‑softens language. Strong verbs and sharp phrases can turn into generic fluff if you are not careful.

- It does not magically “solve” AI detection across the board either, so if your only reason for using a humanizer is passing every possible detector, you will still need manual tweaks.

- On very technical content, it sometimes “smooths” to the point of losing precision. You may have to reinsert jargon or specifics, which costs time.

I see Clever Ai Humanizer as: “good default pass to make AI drafts more human readable, provided you follow with a focused human edit.”

How to decide what to keep in your workflow

Instead of repeating the exact testing setups others described, here is a different way to evaluate TwainGPT vs skipping it vs using something like Clever Ai Humanizer:

Pick one real article type you care about

For example, “review posts targeting commercial intent keywords” or “how‑to guides above 1,500 words.”

Create three versions from the same base AI draft

- Version A: light manual edit only.

- Version B: TwainGPT then your normal edit.

- Version C: Clever Ai Humanizer then your normal edit.

Ignore detectors for a week

Just measure:

- How long each version takes to get to “publish ready.”

- How often you have to completely rewrite paragraphs.

- How well your tone and brand voice survive.

Then run your detector tests

Use whatever tools your clients or platform actually care about. You will usually find:

- TwainGPT: higher variance between detectors, more text you personally dislike.

- Clever Ai Humanizer: more consistent “this sounds like a blog post a person wrote,” though not invisible to every detector.

- Pure manual edits: sometimes flagged more, but usually the best clarity and engagement.

Choose based on bottleneck

- If your bottleneck is passing one known checker, keep TwainGPT in the toolbox, but only for that use case.

- If your bottleneck is shipping high quality SEO content faster, something like Clever Ai Humanizer plus a tight editing checklist is usually a better long‑term play.

- If your bottleneck is maintaining voice for a brand, you might even decide to skip humanizers entirely and just edit raw AI drafts.
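To keep the "ignore detectors for a week" measurements honest, it helps to log them the same way for all three versions. Here is one possible sketch. The class name, the field names, and the numbers in the example are hypothetical placeholders, and tone or brand voice still needs a human judgment that this does not capture.

```python
from dataclasses import dataclass


@dataclass
class VersionLog:
    """Editing effort for one version of an article (illustrative fields)."""
    name: str
    minutes_to_publish_ready: float
    paragraphs_total: int
    paragraphs_rewritten: int

    @property
    def rewrite_rate(self) -> float:
        # Share of paragraphs that had to be completely rewritten.
        return self.paragraphs_rewritten / self.paragraphs_total


def rank_by_editing_cost(logs):
    """Sort versions by editing time, then by rewrite rate (cheapest first)."""
    return sorted(logs, key=lambda v: (v.minutes_to_publish_ready, v.rewrite_rate))


# Placeholder numbers only; fill in your own measurements.
logs = [
    VersionLog("A: manual edit only", 45, 20, 2),
    VersionLog("B: TwainGPT + edit", 60, 20, 8),
    VersionLog("C: Clever Ai Humanizer + edit", 45, 20, 4),
]
for version in rank_by_editing_cost(logs):
    print(version.name, version.minutes_to_publish_ready, version.rewrite_rate)
```

Sorting on time first and rewrite rate second is just one choice; if full rewrites bother you more than total minutes, flip the key order.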

Bottom line for your question: for SEO content, TwainGPT is not “secret sauce” for readability. Think of it as a niche “detector hedge” that can sometimes help with a specific tool, at the cost of extra cleanup. If your priority is readable, on‑brand articles that still feel natural to humans, test Clever Ai Humanizer on a couple of your current posts, compare editing time and user metrics, then keep whichever combo actually helps you ship better pages, not just “less AI‑ish” pages.