I’ve been testing GPTinf’s humanizer tool to make AI content sound more natural, but I’m not sure if it’s actually safe, effective, or worth using long-term. Some people say tools like this can get flagged or hurt SEO, while others claim they work great for blogs and client work. Can anyone share real experiences, pros and cons, and whether you’d trust GPTinf for professional or SEO-focused content?

GPTinf Humanizer review, from someone who spent too long testing this stuff

GPTinf’s homepage throws a big “99% success rate” in your face. That looked good, so I ran it through the same testing setup I used for a bunch of other humanizers.

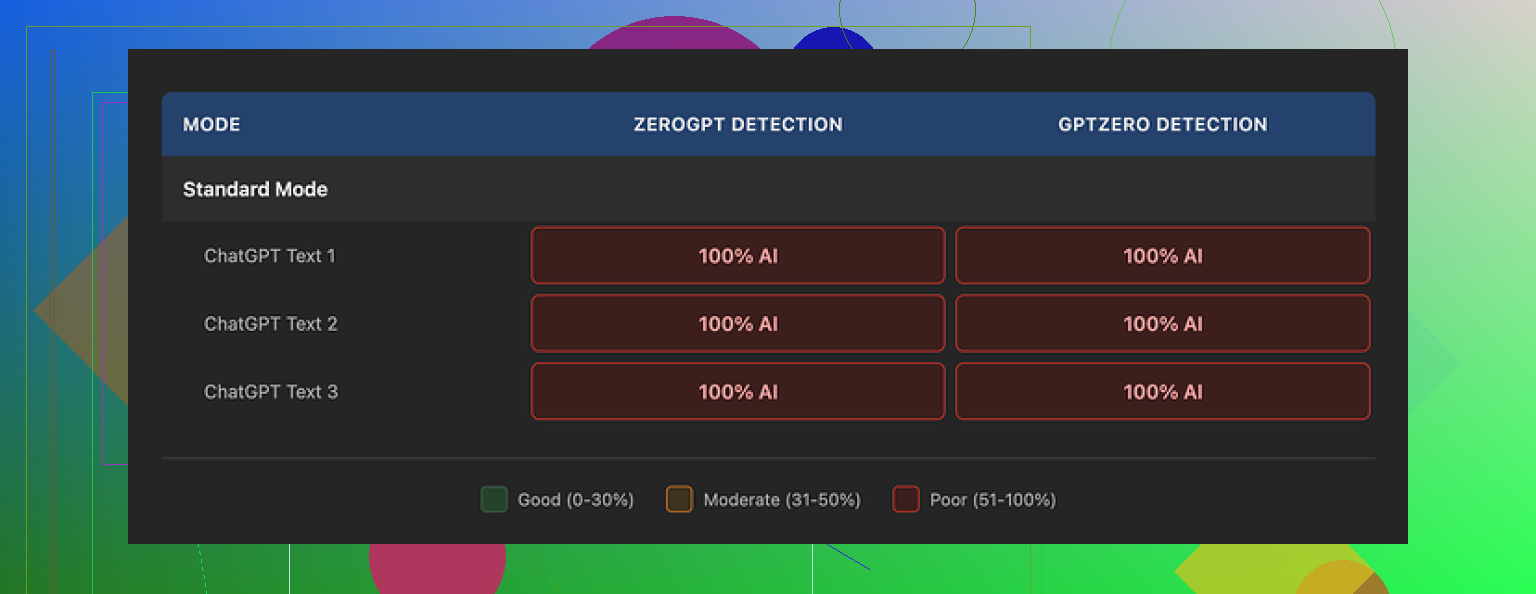

Short version: it hit a 0% success rate in my tests.

I fed GPTinf outputs into GPTZero and ZeroGPT. Both flagged every single “humanized” text as 100% AI-generated, no matter which GPTinf mode I picked. No borderline scores, nothing in the gray area. Just straight AI every time.

What GPTinf does reasonably well

Even though the detection results were bad, the writing itself was not terrible.

Here is what I noticed while using it:

• Writing quality felt like a 7/10. Not painful to read, decent flow, fewer weird phrases than some other tools.

• It was one of the only tools I tried that consistently removed em dashes from the output, which suggests someone thought about at least one common AI tell.

• The sentences looked clean on the surface, but the deeper AI patterns were still there. Same rhythm, same structure, same “ChatGPT voice.” That is exactly what current detectors lock onto.

So on the outside, GPTinf looks polished. Under the hood, it still smells like AI to the detectors.

For comparison, I ran the same prompts through Clever AI Humanizer, using the same detectors and same method:

Clever scored higher, produced text that felt closer to a real person, and did not cost anything when I tested it.

Word limits, pricing, and the annoying part

Then there is the practical stuff.

• Free tier without an account: 120 words per run.

• Free tier with an account: 240 words per run.

If you want to test longer samples against detectors, those limits slow you down fast. I ended up chopping texts into tiny chunks and reassembling them later, which is annoying and error-prone.
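If you do end up chopping long texts to fit the 120- or 240-word caps, a small script at least makes the splitting repeatable. This is a minimal Python sketch, assuming the cap counts whitespace-separated words (GPTinf may count differently, so leave some margin) and that breaking after `.`, `!` or `?` is good enough for your text. `chunk_by_words` is a hypothetical helper of my own, not a GPTinf feature.

```python
import re


def chunk_by_words(text: str, max_words: int = 240) -> list[str]:
    """Split text into chunks of at most max_words words, breaking at sentence ends where possible."""
    # Naive sentence split: break after ., ! or ? followed by whitespace.
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    chunks: list[str] = []
    current: list[str] = []
    for sentence in sentences:
        words = sentence.split()
        # A single sentence longer than the cap gets hard-split by word count.
        while len(words) > max_words:
            if current:
                chunks.append(" ".join(current))
                current = []
            chunks.append(" ".join(words[:max_words]))
            words = words[max_words:]
        # Start a new chunk if this sentence would push the current one over the cap.
        if len(current) + len(words) > max_words:
            chunks.append(" ".join(current))
            current = []
        current.extend(words)
    if current:
        chunks.append(" ".join(current))
    return chunks


sample = "One two three. Four five six seven. Eight nine."
for part in chunk_by_words(sample, max_words=4):
    print(part)
```

Joining words with single spaces collapses line breaks, so paragraph boundaries are lost; you would still need to put those back by hand after reassembling the outputs.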

To push it harder, I had to do what many people do with tools like this: throw extra Gmail accounts at it. That gets old. And it also tells you something about how much friction the tool adds before you even think about paying for it.

Paid plans at the time I checked:

• Lite plan: $3.99/month if billed annually, with 5,000 words.

• Unlimited plan: $23.99/month.

Those prices are not wild compared to other tools. The problem for me is that the detection performance did not match even the cheaper end of the market. Paying for something that still trips detectors every time does not make sense.

Here is the pricing screenshot from my test session:

Privacy and data

The privacy policy bothered me more than the pricing.

Here is what stood out when I read through it:

• It gives the operator broad rights over the content you submit.

• It does not clearly state how long your text is stored after processing.

• There is no precise data retention window written in plain language.

So if you care about where your data sits and for how long, you do not get much clarity.

GPTinf is run by a sole proprietor based in Ukraine. That matters if your job or your location forces you to think about data jurisdiction, cross-border transfers, or regulatory stuff. Some people will not care, others absolutely will.

How it stacked up in real usage

I stopped after a set of side-by-side tests with other tools, mostly Clever AI Humanizer, since that one had no paywall and fewer hard limits.

Same inputs, same detectors, same conditions:

• GPTinf outputs were readable but kept getting flagged as AI by both detectors.

• Clever AI Humanizer gave rewrites that sounded more like something I might type on a tired day, and those performed better on GPTZero and ZeroGPT in my runs.

• Clever stayed free at the time, with no need for account juggling or subscriptions.

So if you are looking for something to test against detectors or use in a pinch, my own experience leaned toward Clever AI Humanizer over GPTinf.

If you still want to try GPTinf, I would suggest this:

- Take a short sample from your own writing.

- Run it through GPTinf.

- Throw both the original and the GPTinf version into GPTZero and ZeroGPT.

- Compare how hard they flag.

That gives you a quick feel for how far from your real voice the tool sits, and whether the “99% success” claim lines up with your own results.

Short version, if you care about safety, SEO, and long-term use: I would not build a content workflow around GPTinf.

A few points that might help your decision.

- On detection and “safety”

Detectors are unreliable, but they still matter for schools, clients, some platforms, and some manual reviewers.

From what you and @mikeappsreviewer describe, GPTinf output keeps getting tagged as AI by GPTZero and ZeroGPT. I have seen similar reports in other communities. The tool smooths the text, but keeps that same GPT rhythm. Detectors key off that.

So if your goal is “fly under every detector”, GPTinf does not look like a strong long-term bet. You would still need:

• Heavy manual editing

• Mixing in your own writing style

• Changing structure, not only wording

If you are not willing to do that, GPTinf will not save you.

- On SEO risk

Google’s public stance is “AI is fine if the content is helpful”. Reality is a bit messier.

What tends to hurt SEO is:

• Rewritten content that adds no new insight

• Same structure and heading patterns as stock AI outputs

• Overuse of generic phrases and safe language

• Sitewide content that feels templated

Humanizers like GPTinf mostly paraphrase. They do not fix those deeper problems. So the SEO risk is less “Google spotted AI” and more “your pages look like everyone else’s and do not earn links or engagement”.

From an SEO view, you are better off using AI for:

• Outlines

• First drafts

• Data gathering

Then you inject:

• Your own examples

• Your own opinions

• Unique angles and internal data

No humanizer will do that for you.

- Privacy and data

This part bothers me more than detection.

If the policy is vague about retention and rights over your text, do not run anything sensitive through it. That includes:

• Client docs

• Unpublished product info

• Internal strategy notes

You have no clear guarantee of how long it sits on their servers or who can access it. For some use cases that is a dealbreaker.

- Practicality and cost

The word caps on the free tier slow you down. That kills flow for long articles, sales pages, scripts.

Paying a monthly fee only makes sense if you gain:

• Detection evasion

• Stronger voice control

• Better output than your base model

From the tests people share, GPTinf does not hit those marks yet. If you still want a humanizer in your stack, test multiple tools side by side with your own content.

Since you mentioned alternatives, Clever AI Humanizer is worth trying. It tends to:

• Produce text closer to a tired but real human voice

• Handle longer pieces with less friction

• Score better in some users’ detector tests

I would still not publish anything straight from Clever AI Humanizer. I would use it as a helper, then:

• Reorder sections

• Inject personal stories

• Swap in your own terms and jargon

• Run a quick style pass in your own voice

- What I would do in your position

If your goal is long term, low stress content:

• Stop relying on GPTinf as a “mask”. Treat it as a glorified paraphraser at best.

• Build a simple process where AI gives you a draft, then you spend real time on structure and opinions.

• Keep your own style guide. Phrases you use, phrases you avoid, how you format lists, how you open and close posts.

• Use detectors only as a rough sanity check, not as the final judge.
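If you keep that style guide as a plain list of phrases to avoid, a few lines of Python can flag them in any draft, whether it came from a humanizer or from you. This is a minimal sketch; the phrases in `AVOID` are hypothetical placeholders for your own list, and `flag_phrases` is just an illustrative helper name.

```python
# Hypothetical examples; replace with the phrases from your own style guide.
AVOID = ["in today's fast-paced world", "delve into", "it's important to note"]


def flag_phrases(draft: str, phrases: list[str]) -> list[tuple[str, int]]:
    """Return (phrase, count) for every avoided phrase found in the draft, case-insensitively."""
    lowered = draft.lower()
    hits = []
    for phrase in phrases:
        # Plain substring count: non-overlapping, and it will also match inside longer words.
        count = lowered.count(phrase.lower())
        if count:
            hits.append((phrase, count))
    return hits


draft = "Let's delve into pricing. We will delve into privacy next."
print(flag_phrases(draft, AVOID))  # [('delve into', 2)]
```

It only catches exact wording, so treat it like the detectors: a quick sanity check before your own style pass, not a replacement for it.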

If you still want a humanizer in the mix, put GPTinf and Clever AI Humanizer head-to-head on 3 or 4 real articles from your niche. Judge them on:

• How much editing you still need

• How “you” the text feels

• How clients or readers respond, not only what detectors say

From what you described, GPTinf is fine for experiments, not great as a core tool you depend on.

I’ve been down this rabbit hole too long and my conclusion on GPTinf is: it’s… fine as a paraphraser, kinda pointless as a “humanizer.”

Couple points that build on what @mikeappsreviewer and @cazadordeestrellas already laid out:

- The “99% success rate”

Every tool throws out some wild percentage. In my testing, GPTinf content consistently pinged GPTZero and ZeroGPT. Same pattern others saw. So if your main goal is “don’t get flagged,” this is not a magic cloak. Detectors are flaky, but they are at least good at spotting that default GPT-type rhythm, and GPTinf does not really break that.

- Safety and “can it hurt SEO”

The SEO risk is not “Google saw GPTinf in the EXIF data and banned you.” That conspiracy stuff is nonsense. The real problem is lazy content: same structure, same generic takes, same safe wording. Humanizers mostly reshuffle words. They almost never fix those deeper issues. So yeah, if you pump out hundreds of GPTinf articles with no real insight, they can absolutely drag a site down over time simply because no one cares to read or link to them.

- Privacy concern that would be a hard no in some jobs

The vague data policy bothers me more than the detection results. If you work with client content or anything remotely sensitive, “we store your stuff somewhere for an undefined time” is not acceptable. This is where GPTinf feels like a liability, not a tool.

- Workflow reality

You already noticed this using it: you still need to:

  - change structure

  - add your own examples

  - inject opinions and niche knowledge

At that point, the “humanizer” part is doing maybe 20 percent of the actual work and you are carrying the other 80. For me, that flips the question: why not just use a regular LLM for a draft and then edit in your own voice instead of paying GPTinf to put a thin filter on top?

- Alternatives without the hype

Not saying it is perfect, but Clever AI Humanizer at least tries harder to break the default AI cadence and sometimes gets closer to a tired human blogger voice. If you are going to use a tool in that category at all, I would test Clever AI Humanizer side by side with GPTinf on a few real articles from your niche and see which one needs less surgery afterward.

My take for long term use:

- I would not build a content strategy around GPTinf.

- I would treat it as a glorified spinner if you insist on keeping it in your stack.

- Focus your time on making content unique with structure, stance, and real experience rather than trying to sneak past detectors with a tool that clearly is not as invisible as the marketing suggests.

And yeah, if the idea is “I want something that helps me write better, safer content,” I would put more energy into your own style guide and maybe a tool like Clever AI Humanizer as a sidekick, not into squeezing value out of GPTinf’s word limits and vague policies.

Short answer: I would not anchor any serious, long-term content workflow on GPTinf, but I also would not obsess over “humanizers” in general as the main solution.

A few angles that were not fully covered by @cazadordeestrellas, @techchizkid and @mikeappsreviewer:

1. The real failure mode: stylistic monoculture

Everyone focused on detectors and privacy, which matters, but the bigger strategic problem is this:

If you and 5 of your competitors all:

- Prompt a mainstream LLM

- Run the output through GPTinf

- Lightly tweak

you still end up in the same “monoculture voice.” Same pacing, same neutral tone, same safe takes.

That hurts you even if Google never explicitly penalizes AI. People bounce faster, dwell time drops, nobody links. It is a user problem first, algorithm problem second.

Humanizers like GPTinf barely touch:

- Information density

- Argument structure

- Topical depth

- Original angles

They just polish the surface. That is why the content still feels generic even if detectors did not exist.

2. Where GPTinf can still be “okay”

I actually disagree slightly with the idea that GPTinf is “pointless.” It is not great, but it can be passable in narrow use cases where:

- Detection risk is low

- You do not care about long term brand voice

- You need quick paraphrasing for something disposable

Examples:

- Internal project notes that no one outside your team will see

- Short ad variants when you already wrote the core copy yourself

- Rough rewrites of low stakes descriptions that you will still heavily edit

In those situations GPTinf being a “glorified paraphraser” is fine. You are not asking it to carry your SEO strategy or pass strict institutional detection.

I still would avoid sending anything sensitive through it, for the privacy reasons already mentioned.

3. Clever AI Humanizer in context

Since you brought up alternatives, here is where I see Clever AI Humanizer fitting, without repeating the same test protocol others described.

Pros

- Tends to break that robotic GPT cadence a bit more, which helps with:

  - Readability for human visitors

  - Basic detector resilience in many casual scenarios

- Handles longer inputs with less friction than GPTinf’s chopping limits

- Produces a voice that feels closer to a real, slightly tired writer rather than a pristine chatbot

- Easier to plug into a workflow where you already have a draft and just want it “roughened up” into a more human-sounding pass

Cons

- Still not a “publish without editing” tool. You must:

  - Rework sections

  - Reinsert your niche language

  - Fix weak arguments or thin sections

- Cannot inject your real experience, stories or data

- If you use it on every piece without variation, your whole site can still converge to one synthetic tone

- Detector performance is better than GPTinf in many tests, but not foolproof enough for strict academic or high-risk environments

In other words, Clever AI Humanizer is safer as an assistive layer for readability, not a shield or a content engine.

4. How I would actually structure a workflow

Instead of trying to choose “the best humanizer,” I’d flip the thinking:

- Use your main LLM for:

  - Research scaffolding

  - Outline variants

  - First-pass examples and counterarguments

- Use a humanizer only for:

  - Smoothing edges when your draft feels too obviously robotic

  - Experimenting with different tonal passes, then cherry-picking good sentences

- Reserve your energy for:

  - Swapping in real case studies, screenshots, numbers

  - Contrarian or opinionated angles that a humanizer will never invent for you

  - Tight introductions and conclusions that signal actual expertise

If you really want one in the stack, I would lean toward Clever AI Humanizer rather than GPTinf, for the reasons others and I described, but treat it as a small utility, not the “core secret” of your publishing operation.

5. About the other takes in this thread

- @cazadordeestrellas is right about needing your own style guide. That matters more than which rewriting tool you pick.

- @techchizkid is correct that SEO risk comes from bland, derivative content, but I think they underplay how tempting it is to scale low quality once a humanizer gives you a false sense of safety.

- @mikeappsreviewer’s tests on detection line up with what many people see in practice, but I would still avoid overfitting your strategy to GPTZero or ZeroGPT. These tools are moving targets and are not the ultimate referee.

Bottom line:

GPTinf is not “dangerous” in some magical way. It is just a weak solution to the wrong problem. If you care about safety, SEO and long term viability, the winning moves are structural and strategic, not swapping in one humanizer for another. Clever AI Humanizer can help polish your work, but your unique insight is what actually keeps you safe and competitive.