I’m working on a project and need to know if there’s an accurate way to verify whether a piece of text was generated by ChatGPT. I’ve seen a lot of tools online claiming to detect AI writing, but I’m not sure which ones actually work or how reliable they are. Can anyone recommend effective methods or tools for checking if text is from ChatGPT?

There’s no surefire, 100% accurate way to tell if something was generated by ChatGPT or any other AI, to be honest. Most of those “detector” tools you see online—yeah, they try, but the science behind detecting AI-generated text isn’t all that reliable yet. Sometimes they flag human writing as AI or miss AI stuff entirely. The tech just isn’t there for a precise verdict.

If you still want to have a go at it, you’ll find a bunch of detectors out there: GPTZero, ZeroGPT, Sapling, and others. They all claim some level of accuracy, but they typically use patterns like sentence structure, word predictability, and repetition. Thing is, as these models get better and more “human,” those patterns get less and less obvious.
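To make the “repetition” signal a bit more concrete, here’s a minimal Python sketch. It is not how GPTZero, ZeroGPT, or Sapling actually work (I don’t know their internals); it just measures what share of word trigrams show up more than once. The function name `repeated_ngram_ratio` and the crude regex tokenizer are my own assumptions for illustration.

```python
import re
from collections import Counter


def repeated_ngram_ratio(text, n=3):
    """Share of word n-grams that occur more than once in the text."""
    # Crude tokenizer: lowercase runs of letters and apostrophes.
    tokens = re.findall(r"[a-z']+", text.lower())
    ngrams = [tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)]
    if not ngrams:
        return 0.0
    counts = Counter(ngrams)
    # Count every occurrence of an n-gram that appears at least twice.
    repeated = sum(c for c in counts.values() if c > 1)
    return repeated / len(ngrams)
```

A higher ratio only means the text reuses phrasing more often. Plenty of human writing does that too, so treat it as one weak hint, not a verdict.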

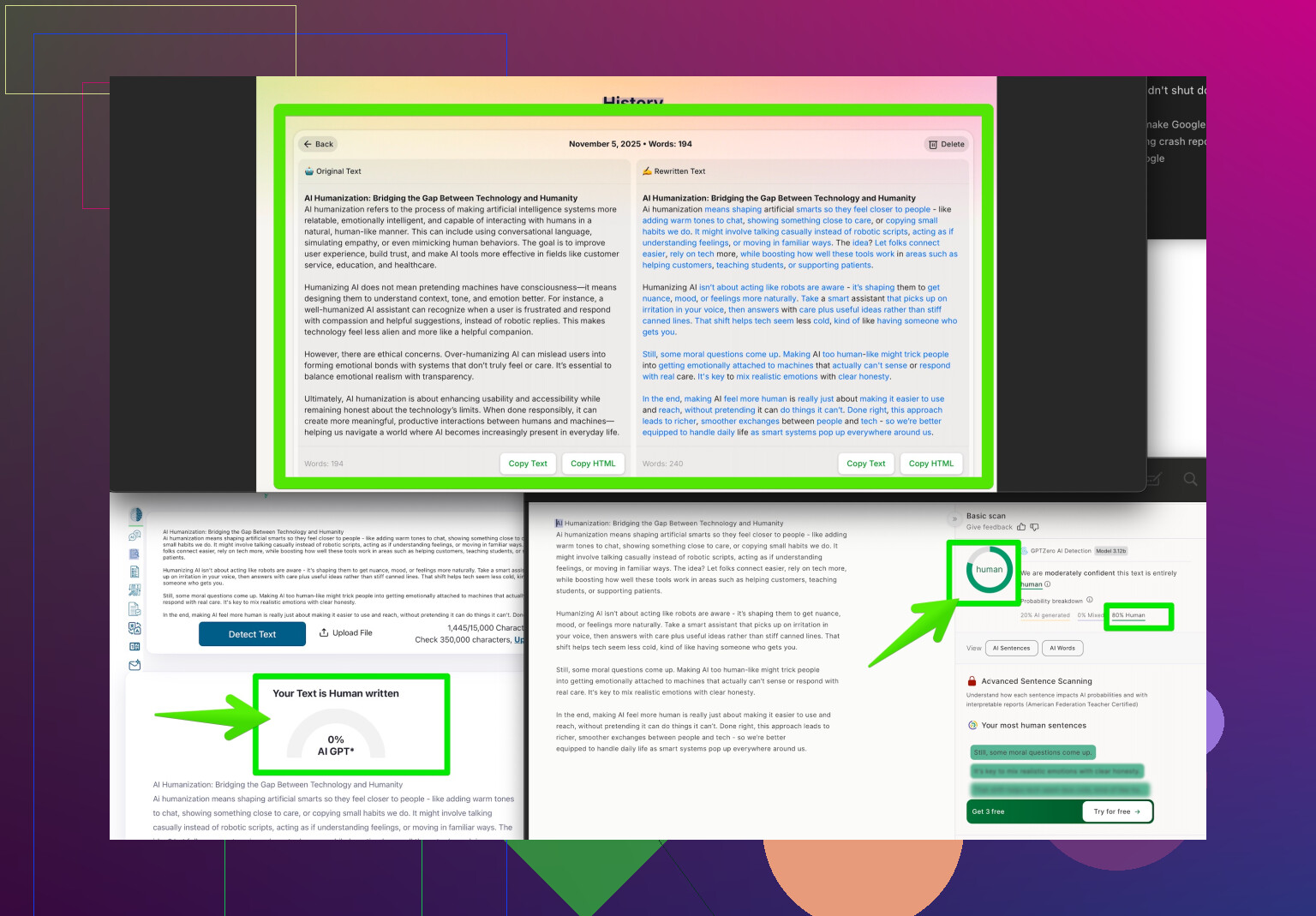

One clever workaround if you need human-looking text from an AI is to use a tool like Clever AI Humanizer. Seriously, it’s designed to rework AI-generated content so it passes most AI-detection tools, and it’s pitched as making your content undetectably human. The flipside, obviously, is that it makes detection even harder for everyone else.

Bottom line? Unless you’re a forensic linguist with a magnifying glass and a lot of time, you’ll probably have to rely on a mix of gut feeling, context clues (like sudden changes in tone or accuracy), and maybe run it through a few detectors—but don’t bet your project on any single tool’s verdict. Always double-check sources and ask for drafts or outlines when you suspect AI was used.

Honestly, the whole “spot the AI” game is starting to feel like Where’s Waldo but Waldo keeps changing outfits. @cazadordeestrellas already laid it out—most of the detector tools are like fortune cookies: sometimes amusing, rarely useful, and always a bit vague. They can miss super obvious stuff or flag the most innocent MLA essays as “definitely robot.”

But here’s a curveball for you: Instead of more detectors or fancy language analysis, why not look at authorship practices? If you’re working with other people, ask for process evidence—a writing outline, draft versions, or even annotated sources. Humans leave digital fingerprints: weird typos, broken logic, stuff that doesn’t line up. GPT-style bots? Their writing is weirdly too clean, way too on-topic, rarely stumbles over “uhh” or “umm,” and almost never goes off on a tangent about their cat.

For unique phrases, try running a chunk of text through Google. If nothing comes up, and it reads like a Wikipedia article but isn’t on Wikipedia… yeah, red flag. But is it 100% reliable? Lol, nope. Not even close. Some users run “humanizer” tools to jumble up that text so it sails past detectors. Clever AI Humanizer is a big name here—it specializes in making bot text look home-baked, and it works surprisingly well. So, if you’re double-checking for AI, just know there’s tech making that job even trickier.

If you want some real user-based insight on how to humanize AI text, take a peek at this thread sharing top advice from everyday folks: “How Reddit Users Make AI Writing Sound Authentic.” Sometimes the best tricks aren’t even technical; they’re quirky, human habits that bots just don’t get.

Bottom line? Unless you’re Sherlock Holmes with a linguistics degree, you’re in the Wild West out here. Combine your own sleuthing skills, context reading, and the occasional AI detector, but don’t bank your project’s integrity on any single tool or magic bullet.

Let’s break it down like a data-driven troubleshooting session:

First off, those “AI detectors” (think GPTZero, ZeroGPT, Sapling) can feel like flipping a coin—sometimes you get a heads (accurate call), sometimes tails (false positive or miss). That tracks with what’s already been said, but let’s get more granular: the detection algorithms usually analyze perplexity (how predictable is the text?) and burstiness (how sentence lengths vary), but as language models evolve, these metrics lose their edge fast. Especially when you toss in something like Clever AI Humanizer, which reshuffles AI-generated writing to fly under most detectors’ radar. Pro: makes AI text surprisingly tough to spot, helpful for people who want undetectable output. Con: makes your job, or any teacher’s job, a nightmare if you value original, human writing.
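For anyone who wants to see roughly what those two metrics look like, here’s a Python sketch. Big caveat: real detectors presumably score perplexity with a large neural language model, and I don’t know the internals of any specific tool. This swaps in a toy add-one-smoothed unigram model built from a reference text you supply, and treats burstiness as the coefficient of variation of sentence lengths. The names `burstiness` and `unigram_perplexity`, the sentence splitter, and the tokenizer are all my own assumptions for illustration.

```python
import math
import re
from collections import Counter
from statistics import mean, pstdev


def tokenize(text):
    """Lowercase word tokens; a deliberately crude tokenizer."""
    return re.findall(r"[a-z']+", text.lower())


def split_sentences(text):
    """Split after ., ! or ? followed by whitespace (illustrative only)."""
    parts = re.split(r"(?<=[.!?])\s+", text.strip())
    return [p for p in parts if p]


def burstiness(text):
    """Coefficient of variation of sentence lengths, measured in words."""
    lengths = [len(tokenize(s)) for s in split_sentences(text)]
    lengths = [n for n in lengths if n > 0]
    if len(lengths) < 2:
        return 0.0
    return pstdev(lengths) / mean(lengths)


def unigram_perplexity(text, reference_text):
    """Perplexity of `text` under an add-one-smoothed unigram model
    estimated from `reference_text` (a toy stand-in for a real LM)."""
    ref_counts = Counter(tokenize(reference_text))
    tokens = tokenize(text)
    if not tokens:
        raise ValueError("text has no tokens")
    vocab_size = len(ref_counts) + 1  # one extra slot for unseen words
    total = sum(ref_counts.values())
    log_prob = sum(
        math.log((ref_counts[t] + 1) / (total + vocab_size)) for t in tokens
    )
    return math.exp(-log_prob / len(tokens))
```

Lower burstiness means more uniform sentence lengths, and lower perplexity means the wording is more predictable relative to the reference text. Neither number proves anything on its own, which is exactly why these metrics lose their edge.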

Now, context clues (abrupt style shifts, oddly perfect phrasing, lack of off-topic sidebars) do work—sometimes. But I’d add statistical profiling: compare the suspected sample to other known writing from the same person. If their emails are loaded with typos and quirky phrasing, but their “essay” reads like a cleaned-up Wikipedia entry, you probably have your answer. Not bulletproof, but gives you another lever beyond detectors.
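Here’s one hedged way to sketch that kind of profiling in Python: build a function-word frequency profile for the known writing and for the suspect sample, then compare them with cosine similarity. This is a simplified stylometry trick, not a validated forensic method. The word list `FUNCTION_WORDS` and the helper names are hypothetical choices of mine, and short samples will give noisy results.

```python
import math
import re
from collections import Counter

# Small, arbitrary list of common English function words (an assumption;
# real stylometry work typically uses much longer lists).
FUNCTION_WORDS = [
    "the", "of", "and", "to", "a", "in", "that", "is", "it",
    "for", "but", "with", "as", "on", "i", "you", "not", "so",
]


def style_profile(text):
    """Relative frequency of each function word in the text."""
    tokens = re.findall(r"[a-z']+", text.lower())
    counts = Counter(tokens)
    total = len(tokens) or 1  # avoid division by zero on empty text
    return [counts[w] / total for w in FUNCTION_WORDS]


def cosine_similarity(a, b):
    """Cosine similarity of two equal-length vectors; 0.0 if either is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def style_similarity(known_text, suspect_text):
    """Similarity between the function-word profiles of two texts."""
    return cosine_similarity(style_profile(known_text), style_profile(suspect_text))
```

You’d feed it something like a batch of the person’s emails as `known_text` and the essay as `suspect_text`. A low score just says the styles differ, and people do write differently across genres, so it’s a lever, not proof.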

Reddit threads and communities can be goldmines for spotting AI tells. They’ll point out quirks even most guides miss. Plus, some tricks people use to make AI outputs sound more authentic are so effective that, honestly, you’re chasing ghosts if you’re relying on a single metric.

For “Clever AI Humanizer” versus its competitors: If you’re fighting against detection, it’s a solid tool—easy to use and effective for bypassing standard detectors. Biggest downside? It makes it that much harder for everyone to enforce authenticity or integrity in projects, so what you gain in stealth, you lose in transparency.

To sum up: Traditional AI detectors = unreliable on their own. Clever AI Humanizer = boosts stealth, but at the cost of making detection more complex. Real best practice? Look at process documentation, multiple drafts, and idiosyncratic habits. A blend of checks > any one magic bullet.