I recently tried Walter Writes AI for content creation after seeing a lot of mixed opinions online, and now I’m unsure what to think. Some features seemed helpful, but I also noticed issues with accuracy, originality, and workflow. I’m looking for honest feedback from people who have real experience with Walter Writes AI. Is it actually reliable for long-term use, or should I move on to a different AI writing tool?

Walter Writes AI Review

I spent an afternoon messing around with Walter Writes AI and the results were all over the map. My tests were mostly about detector scores, not writing quality, so if detector scores are what you care about, keep reading.

Here is what I did and what came out of it.

Detector results

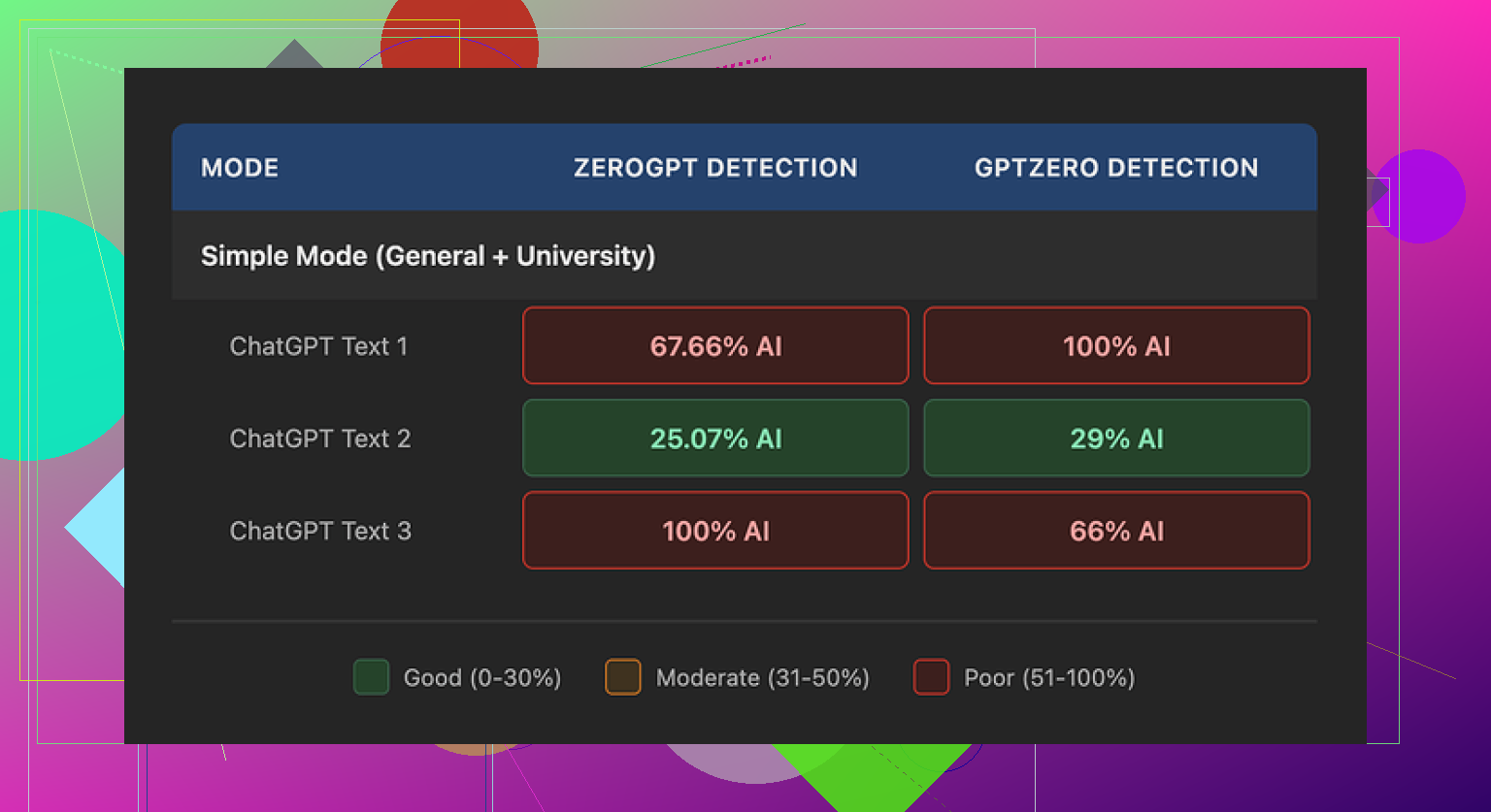

I pushed three different samples through Walter using the free tier, which only offers Simple mode. Standard and Enhanced modes are locked behind the paid plans.

From there:

- One sample came out surprisingly strong

  - GPTZero: 29 percent AI

  - ZeroGPT: 25 percent AI

  For context, a lot of free “humanizers” float much higher than that and get flagged harder, so this one pass looked decent.

- The other two samples were a mess

  - Each of them hit 100 percent AI on at least one of the detectors

So you get this weird mix of “nice, that worked” and “ok, this is unusable”. The behavior felt inconsistent, like the system applies some fixed patterns that detectors catch once they repeat enough.

Writing quirks I kept seeing

Detector scores aside, the output itself had some pretty loud tells. A few patterns repeated:

- Overuse of semicolons

  It liked to drop semicolons where a comma should live. After a few paragraphs, the text looked stiff and off. If you write a lot, you notice it instantly.

- Weird repetition of small words

  In one sample, the word “today” showed up four times across three sentences. No normal person writes like that unless they are forcing a keyword. It felt like some internal template got stuck.

- Parentheses spam

  I kept hitting phrases like “(e.g., storms, droughts)” sprinkled through the text in a way that screamed AI. Same pattern, same structure, repeated across different outputs. If you are trying to pass a human check, those patterns start hurting you.

So yes, the tool altered the text enough for one good run on detectors, but the style artifacts looked machine-made. You would need to edit heavily before submitting anything a human editor will read closely.
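If you want to catch these tells yourself before a human editor does, a rough script can count them. This is a minimal sketch under my own assumptions, not anything Walter or a detector provides: `style_report` is a hypothetical helper, the stopword list is illustrative, and it only counts semicolons, “(e.g.” parentheticals, and the most repeated non-trivial words.

```python
import re
from collections import Counter

# Illustrative stopword list (an assumption): common function words we do not
# want showing up as "repeats". Extend it for your own writing.
STOPWORDS = {
    "the", "a", "an", "and", "or", "of", "to", "in", "is", "it", "that",
    "for", "on", "with", "as", "was", "are", "be", "this", "we", "i", "you",
}


def style_report(text: str, top_n: int = 5) -> dict:
    """Count a few stylistic tells: semicolons, '(e.g.' asides, repeated words."""
    words = re.findall(r"[a-z']+", text.lower())
    counts = Counter(w for w in words if w not in STOPWORDS)
    return {
        "words": len(words),
        "semicolons": text.count(";"),
        "eg_parentheticals": len(re.findall(r"\(e\.g\.", text)),
        "top_repeats": counts.most_common(top_n),
    }


if __name__ == "__main__":
    draft = "Today we test; today we write (e.g., storms, droughts). Today is robust."
    print(style_report(draft))
```

A high semicolon count or one word dominating `top_repeats` in a short passage is a cue to reread that section by hand; the script does not judge quality, it only points you at spots worth checking.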

Pricing and limits

Here is how the pricing looked when I checked it:

- Starter plan

  - Around 8 dollars per month if billed annually

  - 30,000 words included

- Unlimited plan

  - Around 26 dollars per month

  - Still capped at 2,000 words per single submission

  So “unlimited” here only relates to total monthly volume, not the size of each text. You have to break long pieces into chunks.

- Free tier

  - 300 words total, not per use

  After a few tests, I had burned through that, so you do not get a long trial to see how it behaves on real projects.
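Since the Unlimited plan still caps each submission at 2,000 words, here is a rough Python sketch for pre-splitting a long piece. It assumes the cap is counted as whitespace-separated words, which may not match how Walter actually counts, so leaving some margin (say `max_words=1900`) is safer. `chunk_text` is a hypothetical helper, not a Walter feature.

```python
def chunk_text(text: str, max_words: int = 2000) -> list[str]:
    """Split text into pieces of at most max_words words.

    Assumption: words are whitespace-separated tokens. Paragraphs (separated by
    blank lines) are kept whole where possible; whitespace inside a paragraph
    is collapsed to single spaces.
    """
    chunks: list[str] = []
    current: list[str] = []  # paragraphs in the chunk being built
    current_words = 0

    for para in text.split("\n\n"):
        words = para.split()
        if not words:
            continue
        # A single paragraph longer than the cap has to be cut on word boundaries.
        while len(words) > max_words:
            if current:
                chunks.append("\n\n".join(current))
                current, current_words = [], 0
            chunks.append(" ".join(words[:max_words]))
            words = words[max_words:]
        # Start a new chunk if this paragraph would push us over the cap.
        if current and current_words + len(words) > max_words:
            chunks.append("\n\n".join(current))
            current, current_words = [], 0
        current.append(" ".join(words))
        current_words += len(words)

    if current:
        chunks.append("\n\n".join(current))
    return chunks


if __name__ == "__main__":
    article = "\n\n".join(["word " * 900] * 3)  # three 900-word paragraphs
    for i, piece in enumerate(chunk_text(article), start=1):
        print(i, len(piece.split()))
```

Splitting at paragraph boundaries keeps each submission readable on its own, which matters if the tool rewrites each chunk without seeing the others.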

The part that made me pause was the refund and chargeback section. The policy had some heavy-handed language about disputes and even tossed in threats of legal action over chargebacks. That is not standard wording for a small writing tool, and it set off alarm bells for me.

On top of that, data handling for submitted text felt vague. I did not see clear statements about retention length or whether they use your text for future training. For anything sensitive, that is a problem.

What worked better for me

While testing different tools, I kept coming back to Clever AI Humanizer. It consistently produced output that read more like something a human would write, and it did not ask me for a credit card.

You can try it here:

If you want walkthroughs and examples from other users, these helped:

Reddit tutorial on humanizing AI text:

https://www.reddit.com/r/DataRecoveryHelp/comments/1l7aj60/humanize_ai/

Reddit review of Clever AI Humanizer:

https://www.reddit.com/r/DataRecoveryHelp/comments/1ptugsf/clever_ai_humanizer_review/

YouTube review:

My rough takeaway after playing with all this: Walter Writes AI can sometimes dodge detectors on a single pass, but it does not feel stable, the style quirks are obvious, the pricing limits are tight, and the policy language is aggressive. If you are trying to stay low-risk and want something that outputs more natural wording without paying upfront, Clever AI Humanizer treated me better.

I had a very similar reaction to Walter Writes AI as you, but landed a bit more on the “use with caution” side than “totally skip it.”

What worked for me:

- Short, throwaway stuff. Emails, product blurbs, quick outlines.

- If I fed it already decent text and asked for light edits, it stayed mostly on track.

- Detector scores were sometimes ok on mixed tests, like what @mikeappsreviewer saw on that one “good” sample.

Where it fell apart:

- Accuracy

  On factual content, I saw small but annoying errors. Dates slightly off, numbers rounded in odd ways, references that sounded plausible but were wrong when I checked. You need to fact-check everything if you care about trust.

- Originality

  When I ran outputs through plagiarism checkers, I did not get direct copy matches, but the phrasing felt template-like. Same sentence shapes over and over. You can feel it once you read enough of its output in a row.

- Style tells

  I agree with the semicolon and repetition issues mentioned by @mikeappsreviewer, though in my case I saw more overuse of transition phrases like “on the other hand” and “additionally” in almost every second paragraph. That reads as AI to any human editor.

  I also had it repeat niche words. One article had “robust” six times in 800 words. That looks odd to a reader.

- AI detection

  My tests were a bit different. I rewrote the same 600-word blog section three ways and compared:

  - Walter Writes AI

  - Clever AI Humanizer

  - A manual rewrite by me

  On two popular detectors:

  - Walter landed between 40 and 90 percent AI on different tries with the same source. Very unstable.

  - Clever AI Humanizer tended to sit in the 10 to 35 percent AI range for the same source, and was more consistent.

  - My manual rewrite scored low AI across the board.

So I disagree a bit with the idea Walter is useless. It is not. It is just unreliable if your goal is low AI scores plus clean style. You need a backup plan.

Pricing and risk:

- Word caps feel tight if you handle long-form work.

- The aggressive refund and chargeback language you already saw is a red flag for me too. I avoid putting client work into tools with vague data handling and legal threats in their TOS.

What I would do in your place:

- Decide your priority

  - If you care about quality and a human editor will see your stuff, use Walter as a helper only. Let it outline or give you ideas, then rewrite heavily in your own voice.

  - If you care about detector scores and lower AI probability, run your draft through something like Clever AI Humanizer, then manually tweak sentences that sound off.

- Set a workflow

  Example flow that worked ok for me:

  - Draft with a general-purpose AI tool or by hand.

  - Use Walter only to rewrite a paragraph for clarity, or to shorten or expand it.

  - Run the result through Clever AI Humanizer for a final “de-AI” pass if you need that.

  - Do a fast manual sweep for weird phrasing, repetition, and fact errors.

- Avoid high-risk use cases

  I would not put academic work, legal docs, sensitive business docs, or anything under NDA through Walter. The policy and data handling are too murky.

TLDR:

- Walter Writes AI is fine as a rough helper for low-stakes content.

- It is weak as a one-click solution for accurate, original, human-looking text.

- If you want an SEO-friendly humanizer that plays nicer with detectors, Clever AI Humanizer is worth testing side by side.

- Whatever you pick, plan to edit a lot. No tool right now gives you safe, original, detection-friendly text without human cleanup.

I’m kind of in the middle on Walter too, but for slightly different reasons than @mikeappsreviewer and @techchizkid.

Short version: it’s usable, but only if you treat it like a rough draft engine, not a “push button, get publish‑ready content” tool.

What you noticed with accuracy and originality tracks with what I saw, but I honestly think the bigger problem is how “patterned” the voice feels over time. After 3–4 pieces, everything starts sounding like the same blogger trying to hit a word quota. Even when plagiarism checkers say it’s “unique,” it still feels creatively recycled. For anything where your name or brand is on the line, that gets old fast.

Couple points where I slightly disagree with the other two:

- I don’t think the inconsistent detector scores are the main issue. Detectors are flaky anyway. I’d worry more about human readers catching the robotic phrasing than whether some site spits out 30% or 70% AI.

- I’m a bit less forgiving on the TOS stuff. Aggressive chargeback language + fuzzy data retention is a hard no for client work in my book. That’s not just “use with caution” territory, that’s “keep it far away from anything sensitive.”

Where Walter actually worked for me:

- Brain-dumping ideas when I was stuck. I’d throw in a messy paragraph and tell it to reorganize or expand, then rewrite the result in my own voice.

- Super short content where tone doesn’t matter much, like quick tooltips, UI microcopy, or placeholder text.

Where I’d avoid it:

- Anything factual that needs citations or precise numbers. Too many “close but not quite” details.

- Long-form thought pieces. The longer the output, the more obvious the repetition and stock transitions get.

- Academic or legal stuff. Between accuracy and data policies, that’s just asking for trouble.

If your main worry is AI detection and “this reads too robotic,” I’d honestly slot Walter earlier in your workflow and let something like Clever AI Humanizer handle the final polish. Walter for rough structuring, then Clever AI Humanizer to smooth the language and reduce those obvious AI tells. You’ll still need to manually edit, but at least you’re not wrestling the same semicolon/repetition quirks over and over.

So I wouldn’t toss Walter in the trash, but I’d stop expecting it to be your main writer. Treat it like a slightly clumsy assistant and put your real effort into revision and fact‑checking.