I’ve been considering using WriteHuman AI for content and editing, but I’m unsure if it’s actually effective, safe, and worth the cost. Can anyone share real experiences, pros and cons, and whether it’s reliable for consistent, human-sounding writing?

WriteHuman AI Review

I tried WriteHuman after seeing them name-drop GPTZero in their marketing, so I went straight for that detector first.

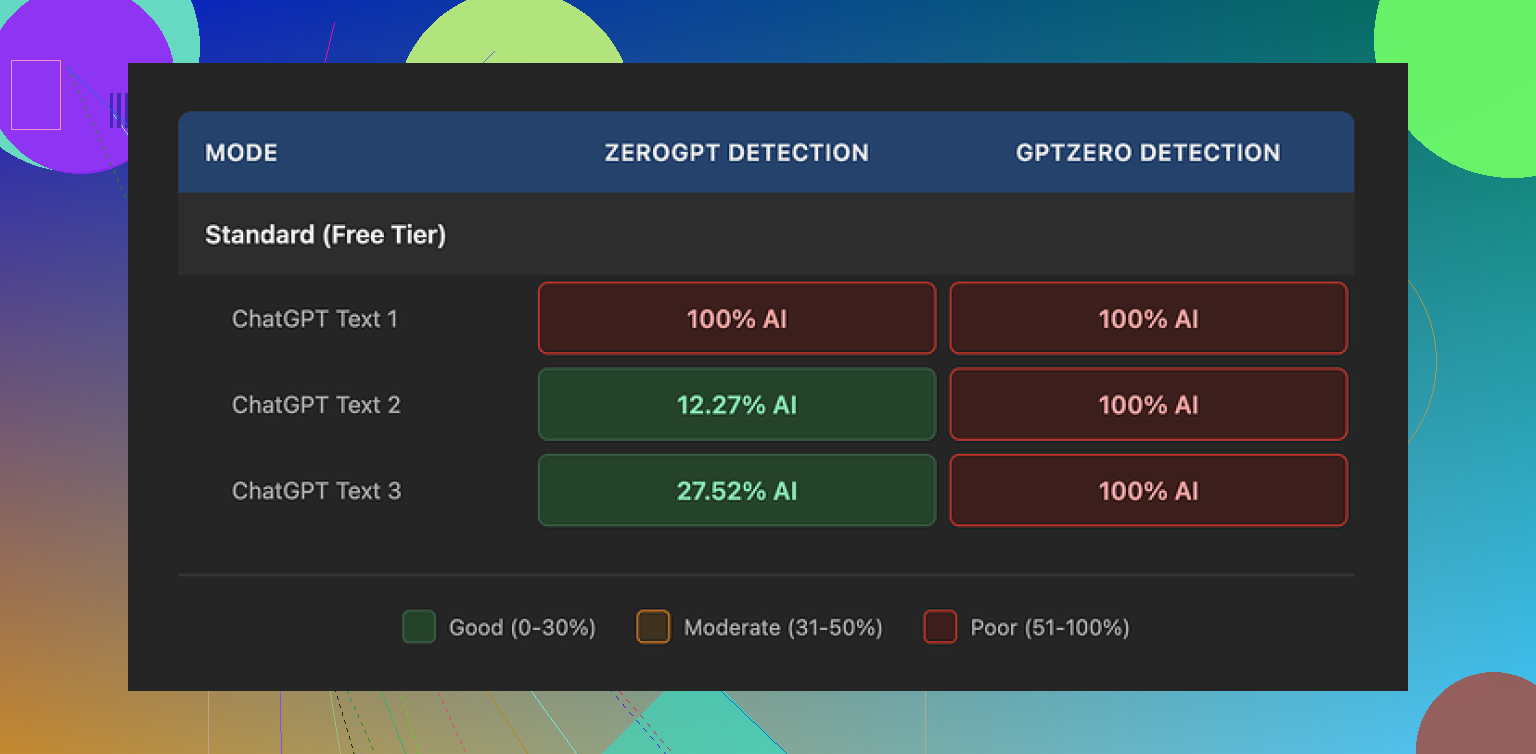

I fed three different samples of WriteHuman output into GPTZero. All three came back flagged as 100% AI. No gray area, no mixed verdict, just full AI every time.

ZeroGPT behaved differently, but not in a way that made me trust the tool more. First sample was 100% AI. Second suddenly dropped to about 12%. Third landed around 28%. Same tool, same generator, similar prompts, wildly different scores.

So for detection evasion, my own runs did not match the “extensively tested” vibe they are going for.
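To put a number on how far apart those ZeroGPT results were, here is a minimal sketch that summarizes the three scores reported above. The 12 and 28 are my approximate readings, and the variable names are just illustrative.

```python
from statistics import mean, pstdev

# ZeroGPT "AI" percentages from the three runs above; 12 and 28 are approximate.
zerogpt_scores = [100, 12, 28]

avg = mean(zerogpt_scores)                            # average score across runs
spread = max(zerogpt_scores) - min(zerogpt_scores)    # gap between best and worst run
std = pstdev(zerogpt_scores)                          # population standard deviation

print(f"mean={avg:.1f}  range={spread}  std={std:.1f}")
```

A range of 88 points across three similar inputs is the reason I would not read much into any single low score.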

The writing itself felt off. There were odd shifts in tone inside a single output. It started neutral, then switched to something more promotional, then went oddly stiff again. One output even had an obvious typo: “shfits” instead of “shifts”. That kind of flaw might help dodge some detectors, but it also makes the text harder to use in anything serious.

Pricing and plans

This part bugged me more than the quality.

Entry pricing on annual billing:

- Basic plan: 12 dollars per month, 80 requests

Once you pay, you get:

- Access to an “Enhanced” model

- More tone options

On paper, that sounds like an upgrade path. In practice, a few things stood out in their terms:

- They say outright they do not guarantee bypass of any detector.

- There is a strict no-refund policy. If it fails your tests, you are stuck.

- Anything you submit is licensed for AI training.

That last point matters if you care about keeping your text private or out of training datasets. If you dislike the idea of your content being used for training, the only safe move is to not upload it at all.

Quick comparison from my own use

I tested this alongside Clever AI Humanizer in the same session, with the same source text.

WriteHuman:

- GPTZero: 100% AI on all three tries

- ZeroGPT: 100%, ~12%, ~28% across three runs

- Tone: inconsistent, noticeable shifts, one clear typo

- Cost: starts paid, no refunds, content used for training

Clever AI Humanizer:

- Worked better in my detection tests

- No paywall for the basic use case when I tried it

If your main goal is to get lower scores on common detectors, my own experience leaned toward Clever AI Humanizer instead of WriteHuman.

I tested WriteHuman for about a week for blog posts and client emails. Here is how it went, without repeating what @mikeappsreviewer already showed in detail.

Pros I saw:

Output style

- It does make text look less like raw GPT output.

- Short sentences, some typos, slightly messy structure.

- For casual emails or internal docs, it felt “human-ish” enough.

Interface

- Simple. Paste, choose tone, run.

- No learning curve. Good if you do not want another complex tool.

Speed

- Fast turnaround. No delays on my side.

Cons:

Detector evasion

- In my tests, it passed some lower-tier detectors.

- On GPTZero and a couple of paid detectors from clients, it still got flagged often.

- If your goal is “never get flagged”, this is risky.

- Their own terms say no guarantee, and my tests matched that.

Quality for real work

- Tone shifts in the same piece, similar to what was mentioned by @mikeappsreviewer.

- I had to re-edit heavily to keep a consistent voice.

- For long-form content, it felt like more work than starting clean with a good model and editing myself.

Privacy and training

- The training clause in their terms is a dealbreaker for some teams.

- I avoided sending client drafts or anything sensitive.

- If you work under NDAs, this is tough to justify.

Pricing and no refunds

- Paying upfront with no refund and no guarantee on detectors is rough.

- If you test it and it fails your use case, you are stuck.

- For occasional users, the per-month cost feels high versus what you get.

Where I slightly disagree with the harsher takes:

- I do not think it is “useless”.

- For students or solo bloggers who need text to feel less robotic and do not care much about strict privacy, it can help a bit.

- It is more of a mild style roughener than a true “humanizer”.

Practical advice for you:

Decide what matters most.

- If you need strong detector evasion for school or corporate filters, I would not rely on WriteHuman alone.

- If you only need a light de-AI look and do your own editing, it can be acceptable, but do not expect miracles.

Test with your own detectors.

- Grab 2 or 3 detectors your teacher, client, or company uses.

- Run the same text through WriteHuman and see if the scores drop enough for your risk level.

Watch your content privacy.

- Do not paste client documents, legal drafts, or anything sensitive, given the training clause.

- Use it only on content you are fine sharing.

Consider alternatives side by side.

- I got better detector behavior with Clever AI Humanizer on the same inputs.

- It felt more stable across detectors and required less cleanup.

- If you care about “AI detection humanizer” type use, I would run a small comparison:

Source text → WriteHuman → detectors.

Same source → Clever AI Humanizer → detectors.

- Keep the one that fits your scores and budget.
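If you want to keep that comparison honest, a small script helps. This is only a sketch: the tool and detector names, the scores, and the `max_acceptable` threshold are all placeholders you would type in by hand after running each detector yourself. It does not call any detector or humanizer API.

```python
from statistics import mean

def summarize(scores_by_tool, max_acceptable):
    """Per tool: mean score, worst score, and whether every detector is at or under the threshold."""
    summary = {}
    for tool, scores in scores_by_tool.items():
        worst = max(scores.values())
        summary[tool] = {
            "mean": mean(scores.values()),
            "worst": worst,
            "passes": worst <= max_acceptable,
        }
    return summary

# Placeholder numbers (percent "AI") entered manually; these are NOT real results.
scores_by_tool = {
    "tool_a": {"detector_1": 90, "detector_2": 40},
    "tool_b": {"detector_1": 30, "detector_2": 20},
}

summary = summarize(scores_by_tool, max_acceptable=35)
for tool, row in summary.items():
    print(tool, row)
```

Judging by the worst detector score rather than the average is deliberate here: one confident flag is usually what gets you in trouble, so a good average does not help much.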

Is it worth the cost?

- For heavy, professional use where detection and privacy matter, I do not think so.

- For light, personal use, maybe, but only after you run your own tests on the free or trial tier of competitors like Clever AI Humanizer.

- I would not start with an annual plan at all.

Short version: if your main concerns are effectiveness, safety, and cost, WriteHuman is kind of “meh” on all three.

A few points that complement what @mikeappsreviewer and @caminantenocturno already shared:

Reliability for “concealing AI”

- The whole “AI humanizer” concept is unstable by design. Detectors change, models change, rules change.

- Any tool that doesn’t guarantee bypass (and openly says so in the TOS) is basically telling you: “Use at your own risk.”

- So if your use case is high‑stakes (school, compliance, corporate policy), I’d personally treat WriteHuman as, at best, a light stylistic filter, not a stealth cloak.

Writing quality vs real editing

- I don’t completely agree that it’s only useful for casual stuff.

- If you’re already a strong writer and just want to rough up ultra‑polished AI text a bit, it can be “good enough” as a first pass.

- But if you actually want a better draft or real editing, it’s the wrong tool. It’s more of a distortion filter than an editor.

- You’ll still need to do serious manual editing to get consistent tone, and at that point a regular LLM plus your own pass is usually more efficient.

Safety and privacy

- The “we can train on your content” clause is the biggest red flag to me.

- That’s not just a theoretical issue. If you work with clients, NDAs, anything proprietary, that clause can put you in breach of contract.

- So “safe” really depends on your threat model. For hobby blogging, fine. For client docs or internal company stuff, I’d say it’s not worth the risk.

Cost vs what you actually get

- Paywall + no refunds + no guarantee on detector bypass is a rough combo.

- The value prop would make more sense if they stood behind specific outcomes or offered a meaningful free tier.

- As it is, you’re paying to experiment with a tool that might not pass the detectors you care about and that trains on your text. That’s not a great ROI equation.

About competitors & alternatives

- Since you mentioned content and editing, not just detection, I’d split your needs:

- For stylistic “de‑AI‑ing”: tools like Clever AI Humanizer are worth testing side by side, especially if AI detection is part of your concern. It tends to come up a lot in these threads because people can actually see the before/after against multiple detectors.

- For real editing: you’re better off with a good model + your own manual style pass, or a dedicated editing workflow, instead of relying on a “humanizer” layer.

When WriteHuman might be worth it

- Low‑risk, personal use where:

- You don’t care that your text is used for training

- You just want AI content to feel a bit less robotic

- You’re willing to manually clean up tone shifts and errors

- Outside of that pretty narrow window, the combo of privacy cost + detection uncertainty makes it hard to justify.

If you’re on the fence, I’d:

- Start with something like Clever AI Humanizer and run your own detection tests on the same text.

- Compare that to a clean LLM output that you personally edit for 5–10 minutes.

Whichever path gives you acceptable detector scores and less cleanup time is the one that’s actually “worth the cost,” whether or not it has “humanizer” in the name.

Is WriteHuman AI worth it? Short analytical take

You already have solid breakdowns from @caminantenocturno, @sognonotturno, and @mikeappsreviewer, so I will just add what their experiences made clear to me from a product‑choice perspective rather than walking through more detector screenshots.

1. What WriteHuman is actually good at

I would frame WriteHuman as:

- A style perturbation tool, not a serious editor

- A way to add minor noise and imperfections to AI text

In that narrow role, there are a couple of workable use cases:

Pros (WriteHuman)

- Quickly roughens very clean AI text so it feels less “glassy”

- Simple interface, low cognitive load

- For informal stuff like personal blogs, Discord announcements, casual emails, it can be “good enough” if you already know how to revise

I actually disagree slightly with the idea that it is only for casual use. If you are a strong writer with a stable voice, you can sometimes use WriteHuman as a first distortion pass, then impose your style on top. That said, you must still do a serious edit afterward.

2. Where WriteHuman falls short

The friction points from everyone’s testing line up:

Cons (WriteHuman)

- AI detection: inconsistent at best across detectors; it might reduce scores in some cases, but nothing in its behavior or terms suggests it is reliable for high‑stakes bypass

- Tone consistency: frequent style shifts in the same piece mean more cleanup time on long-form work

- Privacy: training on user content is a non‑starter for NDA or client work

- Economics: paywall + no refunds + no bypass guarantee is a poor risk profile if you are testing it for serious use

If your priority is “I do not ever want a school or enterprise detector to flag me,” then any tool that openly refuses to guarantee bypass should not be at the center of your workflow. WriteHuman falls into that category.

3. How Clever AI Humanizer compares in practice

Since Clever AI Humanizer came up already, here is how I would position it relative to WriteHuman, without redoing all the step‑by‑step tests others shared.

Pros (Clever AI Humanizer)

- Generally more stable behavior across multiple detectors in side‑by‑side tests reported here

- Tends to require less post‑edit work on tone, so the time cost per piece is lower

- Often available to try without committing money up front, which changes the risk calculation

- Better suited if your main concern is to reduce AI probability scores while preserving readability

Cons (Clever AI Humanizer)

- Still not a magic “invisibility cloak” for AI; any detector can update and catch up

- Like any humanizer, it can occasionally distort meaning or over‑simplify phrasing, so you still need to manually review

- It is not a replacement for genuine editing or original thinking; if your base content is generic, the output will still feel generic, just less obviously model‑generated

If your goals are:

- reduce AI detection scores,

- keep the number of manual edits manageable,

then Clever AI Humanizer is closer to that use case than WriteHuman, based on what everyone here reported.

4. How to choose without redoing all their tests

Without repeating the methods that @caminantenocturno, @sognonotturno, and @mikeappsreviewer already described:

Clarify your risk category

- Hobby / personal: Both tools are “usable,” with Clever AI Humanizer giving you a better detection-to-cleanup ratio.

- Academic / corporate: I would avoid basing your compliance on WriteHuman, and even with Clever AI Humanizer I would treat it as a helper, not a shield.

Clarify your main bottleneck

- If your bottleneck is time spent fixing tone, WriteHuman is harder to justify, because the style shifts add editing overhead.

- If your bottleneck is detector scores, Clever AI Humanizer is the more rational candidate to test first.

Clarify your privacy threshold

- Anything under NDA or with client info should not go into a tool that trains on user content. On that front, the concerns around WriteHuman’s terms are valid and should be taken seriously.

5. Bottom line

- WriteHuman: Reasonable as a low‑stakes style roughener if you do not care about data reuse, are fine with inconsistent detector results, and accept that you will still do substantial manual editing.

- Clever AI Humanizer: Better aligned with “make AI text look less like AI with less cleanup,” especially when detection scores matter to you.

If you are on the fence and have limited time, I would put my testing energy into Clever AI Humanizer first, then only circle back to WriteHuman if you discover a very specific niche workflow where its quirks somehow match your needs.